Simultaneous Localisation and Mapping (SLAM)

by Jonatan Flyckt and Marcus Gullstrand

This page contains information about a final project from the Embedded and Distributed AI course of the Spring 2020 semester. The focus of the course has been on developing real-time intelligent algorithms that can run on embedded systems.

Simultaneous localisation and mapping (SLAM) uses one or more sensors to build a map of an environment while simultaneously estimating an agent's position within that map.

The SLAM problem is of great interest to the robotics community, and SLAM is used by both indoor and outdoor robots, as well as in underwater and airborne systems. In recent years, SLAM has also seen successful use in commercial semi-autonomous vehicles such as Tesla cars. The process of SLAM involves the agent moving through an environment and using one or more sensors to estimate the positions of an unknown number of landmarks by observing how their positions relative to the agent change between time frames. Common sensors for SLAM are cameras, sonars, and lasers.
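The geometric core of a landmark observation can be illustrated with a minimal sketch. It assumes a 2D world, a known agent pose, and a range-and-bearing sensor; the function name `landmark_to_world` is hypothetical. In real SLAM the pose is itself uncertain and is estimated jointly with the landmarks, so this shows only the coordinate transform, not a full algorithm.

```python
import math

def landmark_to_world(pose, rng, bearing):
    """Convert a relative observation into world coordinates.

    pose    -- (x, y, heading) of the agent, heading in radians (assumed known here)
    rng     -- measured distance to the landmark
    bearing -- measured angle to the landmark, relative to the agent's heading
    """
    x, y, heading = pose
    angle = heading + bearing
    return (x + rng * math.cos(angle), y + rng * math.sin(angle))

# The same landmark observed from two different poses (two time frames)
# should resolve to the same world position if the poses are correct.
first = landmark_to_world((0.0, 0.0, 0.0), 2.0, 0.0)
second = landmark_to_world((1.0, 0.0, math.pi / 2), 1.0, -math.pi / 2)
```

When the two estimates disagree, the discrepancy carries information about errors in the assumed poses, which is what a SLAM back end exploits.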

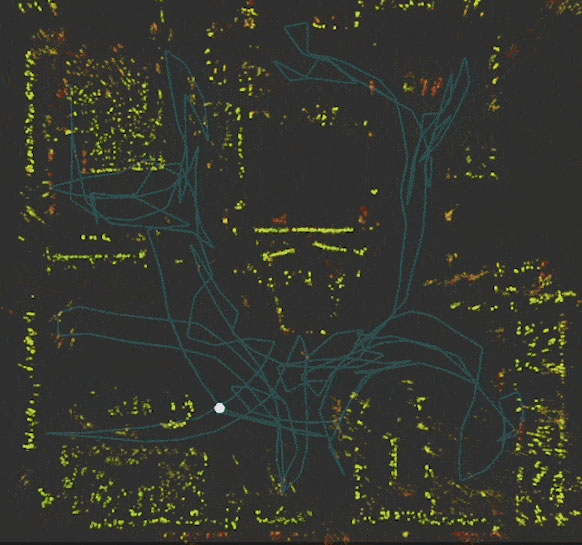

The objective of our project was to create an intuitive and easy-to-use SLAM implementation that could produce accurate 2D floor-plan style maps using mainly the camera sensor on mobile Android devices. By using Google's ARCore library to extract SLAM landmarks, we created an application that continuously plots and updates a 2D map using the Android Canvas. The resulting application works well in well-lit indoor areas, and is a fun and intuitive tool that can be used by anyone with a mobile Android device.
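One way to turn 3D landmarks into a floor-plan view is to drop the vertical axis and scale the remaining two coordinates to pixels. The sketch below is an illustration in Python, not the project's Android code. It assumes landmarks arrive as `(x, y, z)` points in metres with the y axis pointing up (the convention of ARCore world coordinates). The function name, canvas size, and scale are hypothetical choices.

```python
def to_floor_plan(points, pixels_per_metre=50, size=500):
    """Project 3D landmarks onto a top-down square canvas centred on the origin.

    points -- iterable of (x, y, z) tuples in metres, y pointing up (assumption)
    Returns the set of (px, py) pixel coordinates that fall inside the canvas.
    """
    half = size // 2
    pixels = set()
    for x, _height, z in points:
        # Ignore the height and map the horizontal plane to pixel coordinates.
        px = half + round(x * pixels_per_metre)
        py = half + round(z * pixels_per_metre)
        if 0 <= px < size and 0 <= py < size:
            pixels.add((px, py))
    return pixels

# Two landmarks stacked vertically (e.g. on a wall) collapse to one map pixel;
# a landmark far outside the canvas is dropped.
plan = to_floor_plan([(1.0, 0.3, -2.0), (1.0, 1.5, -2.0), (100.0, 0.0, 0.0)])
```

Because vertically stacked points collapse onto the same pixel, walls tend to show up as dense lines in such a projection, which is what gives the map its floor-plan appearance.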

For more information, you can contact Jonatan Flyckt and Marcus Gullstrand using the contact information below.

Jonatan Flyckt

Email: fljo1589@student.ju.se

LinkedIn: https://www.linkedin.com/in/jonatan-flyckt-85b762153/

Marcus Gullstrand

Email: guma1502@student.ju.se

LinkedIn: https://www.linkedin.com/in/marcus-gullstrand-076905146/